Most nonprofit leaders treat impact measurement like a tax audit: something you survive, not something you use. That mindset costs organizations their best strategic advantage. Impact measurement is really about collecting data to assess progress toward intended outcomes, enabling learning, strategy refinement, and continuous improvement. When you use it well, it sharpens your programs, builds funder trust, and keeps your mission honest. This article covers core concepts, proven frameworks, community and education applications, common pitfalls, and the mindset shift that separates high-performing nonprofits from the rest.

Table of Contents

- What is impact measurement and why does it matter?

- Core frameworks and methodologies in impact measurement

- Measuring community-driven change: approaches and challenges

- Impact measurement in education: benchmarks and capacity growth

- Common pitfalls and emerging debates in impact measurement

- A smarter approach: learning, adaptation, and context before compliance

- How YES Foundation can partner in your impact journey

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Focus on core outcomes | Prioritize a few vital metrics connected to your mission for meaningful evaluation. |
| Blend methods for depth | Use quantitative data alongside stories and self-assessment for a full impact picture. |
| Tailor frameworks to context | Select measurement methods that fit your program scale, community, and capacity. |
| Emphasize learning over compliance | Shift focus from box-ticking to continuous improvement and stakeholder engagement. |
| Avoid common pitfalls | Watch for mission drift, measurement overload, and one-size-fits-all solutions. |

What is impact measurement and why does it matter?

Impact measurement is the disciplined practice of tracking whether your programs are actually changing lives in the ways you intend. It goes beyond counting how many people attended a workshop or received a meal. It asks: Did anything meaningfully improve for the people you served? That distinction matters enormously for how you design programs, allocate resources, and tell your story to the world.

There are two very different reasons organizations measure impact. The first is compliance: funders require reports, boards want dashboards, and regulators need documentation. The second is learning: you want to know what works, fix what does not, and build something better over time. Most organizations do the first. The best ones prioritize the second.

Measurement enables learning, strategy refinement, and continuous improvement when it is treated as an internal tool rather than an external obligation. Organizations that embed this mindset into their culture adapt faster, attract stronger funding, and serve communities more effectively.

Here is why robust impact measurement benefits your nonprofit directly:

- Organizational learning: You identify what is working and why, so you can replicate success intentionally.

- Program adaptation: Real-time or regular data lets you course-correct before small problems become large failures.

- Stakeholder trust: Transparent reporting builds credibility with funders, community members, and board members alike.

- Funding proof: Documented outcomes make grant applications and donor pitches far more compelling.

- Mission alignment: Measurement keeps your team focused on the change you actually exist to create.

"The organizations that use measurement as a learning tool rather than a compliance exercise are the ones that consistently improve their programs and deepen their community impact." — Bridgespan Group

Think of impact measurement less like an annual report and more like a GPS. It does not just tell you where you have been. It tells you whether you are still heading where you meant to go.

Core frameworks and methodologies in impact measurement

Knowing why to measure is only half the battle. Knowing how to measure is where most organizations get stuck. Fortunately, several proven frameworks exist, each suited to different organizational contexts and program types.

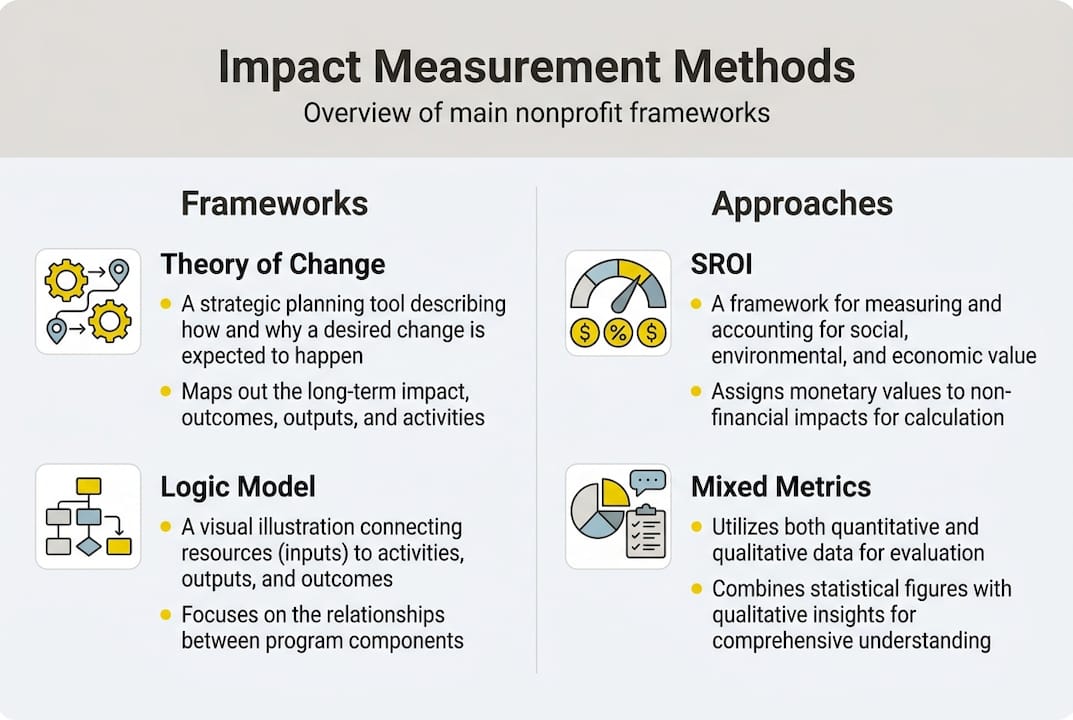

- Theory of Change: Maps the causal pathway from your activities to your long-term goals. It forces you to articulate your assumptions and is especially useful for complex, multi-year initiatives.

- Logic Models: Simpler visual tools that connect inputs, activities, outputs, and outcomes in a linear format. They work well for grant reporting and program planning.

- SROI (Social Return on Investment): Quantifies social value in monetary terms, making it powerful for demonstrating value-for-money to funders who think in financial terms.

- Mixed methods: Combine quantitative data (numbers, rates, percentages) with qualitative data (interviews, stories, observations) for a fuller picture.

- Real-time dashboards: Give program managers ongoing visibility into key metrics.

Each of these methodologies serves a distinct evaluative purpose. The table below compares when to use each one, along with its strengths, limitations, and best-fit program types.

| Framework | When to use | Pros | Cons | Best-fit program type |
|---|---|---|---|---|
| Theory of Change | Long-term, complex initiatives | Clarifies assumptions | Time-intensive to build | Advocacy, systems change |
| Logic Model | Grant reporting, program design | Simple, visual | Can oversimplify | Direct service, education |
| SROI | Funder accountability | Monetizes social value | Requires strong data | Health, workforce dev |
| Mixed Methods | Holistic evaluation | Rich, nuanced findings | Resource-intensive | Community development |
| Real-time Dashboard | Ongoing program management | Immediate feedback | Needs data infrastructure | Any ongoing program |
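
To make the SROI row concrete, here is a minimal Python sketch of one common simplified form of the calculation: the present value of monetized social benefits divided by the amount invested. The function names, the dollar figures, and the 3.5% discount rate are illustrative assumptions, not values from any real program. Full SROI analyses typically also adjust for what would have happened anyway and for the share of change attributable to others, which this sketch leaves out.

```python
def present_value(annual_benefits, discount_rate):
    """Discount yearly benefit values (year 1, year 2, ...) back to today's money."""
    return sum(
        value / (1 + discount_rate) ** year
        for year, value in enumerate(annual_benefits, start=1)
    )


def sroi_ratio(annual_benefits, investment, discount_rate):
    """Simplified SROI: present value of social benefits per dollar invested."""
    if investment <= 0:
        raise ValueError("investment must be positive")
    return present_value(annual_benefits, discount_rate) / investment


# Hypothetical figures: $100,000 invested, $60,000 of monetized
# social benefit in each of the next three years.
benefits = [60_000, 60_000, 60_000]
ratio = sroi_ratio(benefits, investment=100_000, discount_rate=0.035)
print(f"Estimated social value per $1 invested: ${ratio:.2f}")
```

A ratio above 1 suggests the program generates more monetized social value than it costs, but the result is only as credible as the benefit estimates that feed it.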

A critical distinction: outputs are what you produce (number of students trained, meals served), while outcomes are the changes that result (students earning credentials, families achieving food security). Funders increasingly want outcomes, not just outputs.
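
The output versus outcome distinction can be sketched in a few lines of Python. The class name, field names, and figures below are hypothetical; the point is only that an outcome is reported relative to the output that produced it.

```python
from dataclasses import dataclass


@dataclass
class ProgramYear:
    students_trained: int    # output: what the program produced
    credentials_earned: int  # outcome: the change that resulted

    def outcome_rate(self) -> float:
        """Share of trained students who went on to earn a credential."""
        if self.students_trained == 0:
            return 0.0
        return self.credentials_earned / self.students_trained


# Hypothetical program year
year = ProgramYear(students_trained=200, credentials_earned=90)
print(f"Output: {year.students_trained} students trained")
print(f"Outcome rate: {year.outcome_rate():.0%} earned a credential")
```

Reporting the rate alongside the raw count keeps a large output number from masking a weak outcome.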

Pro Tip: Resist the urge to measure everything. Pick a "vital few" metrics that directly connect to your mission and theory of change. Three meaningful indicators tracked consistently beat twenty scattered ones tracked poorly.

Measuring community-driven change: approaches and challenges

Community development work is where impact measurement gets genuinely complicated. You are often trying to measure things like shifts in community power, growth in local assets, or progress toward equity and inclusion. These are real, important changes, but they resist simple counting.

Measuring community-driven change means tracking indicators of power, assets, and equity, and drawing on self-assessments, storytelling, and diversified data sources to capture what numbers alone cannot.

| Indicator type | Sample measures | Sample tools |
|---|---|---|
| Community power | Resident leadership roles, decision-making participation | Surveys, meeting records |
| Asset growth | Local business starts, homeownership rates | Administrative data, interviews |
| Equity and inclusion | Demographic representation in programs | Disaggregated data, focus groups |
| Social cohesion | Trust scores, civic engagement rates | Validated survey scales |
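
As one illustration of the equity and inclusion row, the sketch below compares each group's share of program participants with its share of the wider community. The function name, group labels, and counts are hypothetical, and a real analysis would need categories defined with the community itself.

```python
def representation_gap(participants, community):
    """Return program share minus community share for each group.

    Both arguments map a group label to a head count. A negative value
    means the group is under-represented in the program.
    """
    total_participants = sum(participants.values())
    total_community = sum(community.values())
    if total_participants == 0 or total_community == 0:
        raise ValueError("both populations must be non-empty")

    gaps = {}
    for group, community_count in community.items():
        program_share = participants.get(group, 0) / total_participants
        community_share = community_count / total_community
        gaps[group] = program_share - community_share
    return gaps


# Hypothetical counts
participants = {"Group A": 60, "Group B": 40}
community = {"Group A": 500, "Group B": 500}

for group, gap in representation_gap(participants, community).items():
    print(f"{group}: {gap:+.0%} relative to community share")
```

A gap like this is a prompt for conversation, not a verdict; focus groups can help explain why a group is over- or under-represented.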

Community initiatives come with unique measurement challenges that educational or direct-service programs do not always face:

- Change is often non-linear, with progress, setbacks, and unexpected pivots that standard metrics miss.

- Stakeholder diversity means different groups define success differently, and no single metric satisfies everyone.

- Attribution is hard: when a neighborhood improves, was it your program, the economy, or a dozen other factors?

- Long timelines mean your measurement window may not capture the full effect of your work.

- Power imbalances can distort data when community members feel pressure to report positive results.

Pro Tip: Pair self-assessment tools and community storytelling with your quantitative data. Numbers tell you what changed. Stories tell you why it mattered and how it felt to the people you serve. Both are necessary for honest evaluation.

Impact measurement in education: benchmarks and capacity growth

In the education sector, impact measurement has a long track record of driving real organizational growth. When you know which benchmarks matter, you can build programs that move the needle and prove it clearly.

Common educational impact benchmarks vary by student age and program type. For K-12 youth development, key markers include third-grade reading proficiency, eighth-grade math performance, A-G course completion (the course sequence required for eligibility at California's public universities), and post-secondary enrollment and success rates. Measuring these benchmarks consistently builds both programmatic capacity and organizational reach.

Here is a practical sequence for implementing a measurement plan in an educational program:

- Identify your intended outcomes tied directly to your theory of change and the student population you serve.

- Select a vital few metrics that are measurable, meaningful, and actionable within your program's scope.

- Build data collection systems that are simple enough for program staff to use consistently without burnout.

- Analyze data regularly, not just at year-end, so you can adapt curriculum or delivery mid-program.

- Use findings to adapt, sharing results with staff, students, and funders to close the learning loop.
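
The middle steps of this sequence (pick a vital few metrics, collect readings, review them regularly) can be sketched as a small Python structure. The class, metric names, targets, and readings are all hypothetical, and the sketch assumes that a higher value is better for every metric.

```python
from dataclasses import dataclass, field


@dataclass
class Metric:
    name: str
    target: float  # assumed: higher values are better
    readings: list = field(default_factory=list)

    def record(self, value: float) -> None:
        """Add the reading from the latest review period."""
        self.readings.append(value)

    def on_track(self) -> bool:
        """True when the most recent reading meets the target."""
        return bool(self.readings) and self.readings[-1] >= self.target


def needs_attention(metrics):
    """Names of metrics with no data yet or a latest reading below target."""
    return [m.name for m in metrics if not m.on_track()]


# Hypothetical metrics and mid-year readings
reading = Metric("Third-grade reading proficiency rate", target=0.70)
math_metric = Metric("Eighth-grade math proficiency rate", target=0.60)
reading.record(0.62)
reading.record(0.71)
math_metric.record(0.55)

print(needs_attention([reading, math_metric]))
```

Running a check like this each term, rather than once a year, is what makes mid-program adaptation possible.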

The payoff for this kind of disciplined measurement can be significant. Organizations that commit to outcome tracking have reported improvements across multiple dimensions: cost reductions of around 18%, revenue increases of up to 114%, and program reach growth as high as 125%. These figures are upper-range results rather than guarantees, but they illustrate what can happen when nonprofits treat measurement as a strategic tool rather than a reporting burden.

Scaling effective educational programs depends on this evidence base. Without clear outcome data, you cannot identify which program components drive results, which means you cannot replicate success at larger scale with confidence.

Common pitfalls and emerging debates in impact measurement

Even well-intentioned organizations make costly measurement mistakes. Knowing the most common traps helps you avoid them before they distort your strategy or drain your team's capacity.

The most frequent mistake is metric overload: tracking so many indicators that staff spend more time collecting data than delivering programs. Another common error is treating Randomized Controlled Trials (RCTs) as a default gold standard. RCTs draw criticism for their high costs and lack of context sensitivity, and most nonprofit RCTs fail to meet rigorous standards in practice. They can also freeze innovation by requiring stable, unchanging program designs during evaluation periods.

"The 'gold standard' framing of RCTs has pushed many nonprofits toward expensive, rigid evaluations that serve funder optics more than organizational learning. Mixed methods, stakeholder voice, and continuous feedback loops often generate more useful insights." — Stanford Social Innovation Review

For nonprofits that need to balance rigor with innovation and genuine stakeholder engagement, mixed methods, participant feedback, and continuous learning often prove more useful than a one-shot RCT.

Watch for these red flags in your own measurement practice:

- Mission drift: You start measuring what funders want instead of what your community needs.

- Power imbalances: Evaluation is done to communities rather than with them.

- Abstract vs. practical gaps: Your metrics look good on paper but do not reflect real-world change.

- Ignoring community values: Data collection excludes the cultural context that gives results meaning.

- Compliance theater: Reports are polished and positive, but internal learning is minimal.

The goal is not a perfect evaluation design. The goal is meaningful improvement in the lives of the people you serve. Keep that as your north star when choosing what and how to measure.

A smarter approach: learning, adaptation, and context before compliance

Here is the uncomfortable truth most measurement consultants will not tell you: many nonprofits are measuring a lot and learning very little. They have dashboards, annual reports, and logic models. They also have programs that have not meaningfully evolved in years.

The problem is not the tools. It is the mindset. When compliance drives measurement, you optimize for looking good rather than getting better. Measurement drives improvement only when it is learning-focused, but it risks mission drift when compliance becomes the primary motivation. Stakeholder involvement is not optional; it is what makes findings trustworthy and useful.

The nonprofits we have seen grow their impact most dramatically are the ones that treat a failed program as a data point, not a scandal. They ask hard questions of their own work. They involve the people they serve in defining what success looks like. They use context-driven, adaptable approaches instead of rigid templates imported from a sector that does not share their reality.

Count less. Learn more. Evaluate what matters to the people you serve, not just what satisfies a grant report. That shift is harder than it sounds, but it is where real impact lives.

How YES Foundation can partner in your impact journey

You now have a clear picture of what strong impact measurement looks like, from choosing the right framework to avoiding the compliance trap. The next step is putting it into practice within your own organization.

At YES Foundation, we work alongside nonprofit leaders and program evaluators to design measurement strategies that are mission-aligned, community-centered, and built for real-world use. Whether you are launching your first evaluation plan or refining an existing system, our resources and guidance are designed to help you measure what matters, communicate your outcomes with confidence, and scale what works. Reach out to explore how we can support your impact measurement goals and help your programs create the generational change your community deserves.

Frequently asked questions

What is the difference between outputs and outcomes in impact measurement?

Outputs are the immediate products of your program activities, like the number of workshops held or students enrolled, while outcomes are the actual changes in knowledge, behavior, or conditions that result. Most frameworks track the two separately, with long-term impact as the ultimate goal.

How do you choose the right impact measurement framework?

Select a framework based on your program goals, organizational capacity, funder requirements, and input from the communities you serve. The best-fit framework depends on context, program size, and stakeholder needs, and mixing quantitative and qualitative approaches often yields the most actionable results.

Why are Randomized Controlled Trials (RCTs) controversial in social sector evaluation?

RCTs are expensive, often insensitive to local context, and can limit a program's ability to adapt and innovate during the evaluation period. In practice, many nonprofit RCTs also fall short of rigorous standards.

What steps can a small nonprofit take to start measuring impact effectively?

Start by identifying two or three mission-critical outcomes tied to your theory of change, then gather both numbers and community stories, and involve stakeholders early so your measurement reflects real priorities. Build from there as your capacity grows.